If you build with AI APIs — ModelsLab, OpenAI, Replicate, Runway — you've hit this wall. You open Claude Code, start wiring up your MCP tools, and 30 minutes later the session is sluggish. Context window: 40% gone. You haven't even written the hard part yet.

A senior software engineer just open-sourced the fix. MCP Context Mode is an MCP server that sandboxes tool outputs before they hit your context window. Result: 315 KB of raw tool output becomes 5.4 KB. Sessions that used to slow down at 30 minutes now run for 3 hours. It hit the Hacker News front page with 115 points this week.

Here's what's happening, why it matters for AI API developers, and how to set it up today.

Why Your Context Window Dies Fast with MCP

MCP became the standard way for AI agents to call external tools — your image API, your database, your file system. But each tool interaction eats context from both directions:

Incoming: Tool definitions. With 81+ tools active, Cloudflare found this alone consumes 143K tokens (72% of a 200K window) before your first message.

Outgoing: Raw tool output. This is the part MCP Context Mode fixes.

The raw output problem is brutal for AI API developers specifically:

A Playwright snapshot of your UI: 56 KB

20 GitHub issues from your repo: 59 KB

One access log (500 requests): 45 KB

An analytics CSV (500 rows): 85 KB

Run a test suite while debugging your ModelsLab integration and you can burn 200+ KB in a single session. The model starts forgetting context. Code quality drops. You restart.

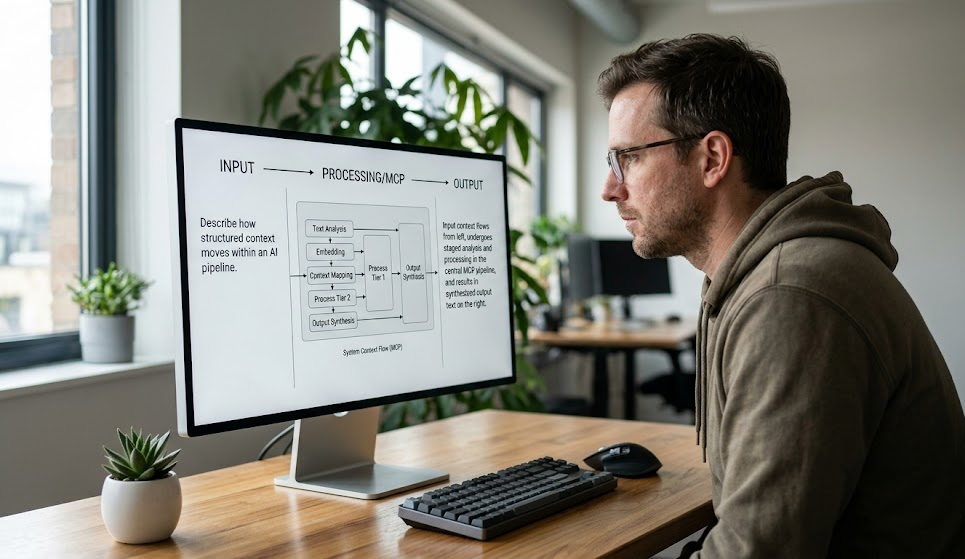

How MCP Context Mode Fixes This

Context Mode sits between Claude Code and the tool outputs. Instead of dumping raw data into the conversation, it runs a sandbox subprocess that processes the output and returns only a structured summary.

The numbers after processing:

Playwright snapshot: 56 KB → 299 bytes

GitHub issues (20): 59 KB → 1.1 KB

Access log (500 requests): 45 KB → 155 bytes

Analytics CSV (500 rows): 85 KB → 222 bytes

Git log (153 commits): 11.6 KB → 107 bytes

Full session benchmark: 315 KB → 5.4 KB. Context remaining after 45 minutes: 99% instead of 60%.
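To put those figures on one scale, here is a small sketch that turns each before/after pair into a percentage reduction. The sizes come from the list above; treating 1 KB as 1024 bytes is an assumption, since the benchmark does not say which convention it uses.

```python
# Sizes taken from the benchmark list above.
# Assumption: 1 KB = 1024 bytes (the source does not specify).
KB = 1024

benchmarks = {
    "Playwright snapshot": (56 * KB, 299),
    "GitHub issues (20)": (59 * KB, 1.1 * KB),
    "Access log (500 requests)": (45 * KB, 155),
    "Analytics CSV (500 rows)": (85 * KB, 222),
    "Git log (153 commits)": (11.6 * KB, 107),
    "Full session": (315 * KB, 5.4 * KB),
}


def reduction_percent(raw_bytes: float, summarized_bytes: float) -> float:
    """Share of the raw output that never reaches the context window."""
    return 100.0 * (1 - summarized_bytes / raw_bytes)


for name, (raw, summarized) in benchmarks.items():
    print(f"{name}: {reduction_percent(raw, summarized):.1f}% smaller")
```

The full-session line works out to a reduction of roughly 98%, which is the headline number behind the longer sessions.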

You don't change how you work. A PreToolUse hook automatically routes outputs through the sandbox. The raw data — log files, API responses, snapshots — never enters the conversation.

Why This Matters Specifically for ModelsLab API Development

When you're building an image generation pipeline with ModelsLab's API, a typical Claude Code session involves:

Fetching API documentation pages

Calling test endpoints and reading JSON responses

Checking error logs

Running browser tests against your UI

Reading multiple source files

Each of these dumps raw content into context. A single ModelsLab API response for a batch generation request can be several KB of JSON. Multiply that by a debugging session and you're burning context fast.

With Context Mode, the sandbox extracts the signal — status codes, key fields, error messages — and discards the noise. Your 200K token window lasts the full session.
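As an illustration of what "extracting the signal" can look like for an error log, here is a minimal sketch that reduces an access log to a one-line summary. It assumes Common Log Format style lines; the function name and the summary format are hypothetical and are not taken from Context Mode itself.

```python
import re
from collections import Counter

# Matches the request and status parts of a Common Log Format line, e.g.
#   1.2.3.4 - - [date] "GET /path HTTP/1.1" 200 512
# Assumption: your logs follow this shape; adjust the pattern if they don't.
LINE_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3})')


def summarize_access_log(text: str, top_n: int = 3) -> str:
    """Collapse a raw access log into request counts per status code."""
    statuses = Counter()
    failing_paths = Counter()
    for line in text.splitlines():
        match = LINE_RE.search(line)
        if not match:
            continue  # skip lines that are not request entries
        status = int(match.group("status"))
        statuses[status] += 1
        if status >= 400:
            failing_paths[match.group("path")] += 1

    parts = [f"requests={sum(statuses.values())}"]
    parts += [f"{code}x{count}" for code, count in sorted(statuses.items())]
    if failing_paths:
        worst = ", ".join(f"{p} ({n})" for p, n in failing_paths.most_common(top_n))
        parts.append(f"failing: {worst}")
    return "; ".join(parts)
```

Only the returned string would need to enter the conversation; the log itself stays on disk.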

The Sandbox: 10 Language Runtimes, Zero Context Bleed

The execution sandbox supports 10 languages: JavaScript, TypeScript, Python, Shell, Ruby, Go, Rust, PHP, Perl, R. Bun is auto-detected for 3-5x faster JS/TS execution.

Authenticated CLIs work through credential passthrough — gh, aws, gcloud, kubectl, docker. The subprocess inherits your environment variables and config paths without exposing them to the conversation context.

Each execute call spawns an isolated subprocess. Scripts can't access each other's memory or state. Raw data never leaves the sandbox.
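The isolation idea can be sketched with Python's standard `subprocess` module. This is not Context Mode's actual implementation, just a minimal illustration of the pattern: each call gets a fresh interpreter, and only what the script prints comes back. The function name `run_isolated` is hypothetical. Note that this separates memory and state between calls; it is not a security boundary.

```python
import subprocess
import sys


def run_isolated(script: str, timeout_s: float = 10.0) -> str:
    """Run a Python snippet in a fresh interpreter and return only its stdout.

    subprocess.run inherits the parent's environment by default, so
    credentials held in environment variables are visible to the child
    without being passed through the caller's return value.
    """
    result = subprocess.run(
        [sys.executable, "-c", script],
        capture_output=True,
        text=True,
        timeout=timeout_s,
    )
    if result.returncode != 0:
        # Return a short error line instead of the full traceback.
        last_line = result.stderr.strip().splitlines()[-1:]
        return f"error (exit {result.returncode}): {' '.join(last_line)}"
    return result.stdout.strip()


# The script builds a large list, but only the printed summary is returned.
summary = run_isolated("data = list(range(100000)); print(len(data), sum(data))")
print(summary)
```

Because every call starts a new process, a variable defined in one script does not exist in the next one.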

The Knowledge Base: BM25 Search Over Your Docs

Context Mode also includes a local knowledge base. The index tool chunks markdown content by headings (keeping code blocks intact), stores it in SQLite FTS5, and uses BM25 ranking for search.
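Heading-based chunking that keeps code blocks intact can be sketched as follows. This is a simplified stand-in for whatever the index tool does internally; the function name and the chunk dictionary shape are assumptions made for illustration.

```python
def chunk_markdown(text: str) -> list[dict]:
    """Split markdown into chunks at headings, never inside a fenced code block."""
    chunks = []
    path = []        # current heading hierarchy, e.g. ["API", "Auth"]
    body = []        # lines collected under the current heading
    in_fence = False

    def flush():
        content = "\n".join(body).strip()
        if content:
            chunks.append({"heading": " > ".join(path), "content": content})
        body.clear()

    for line in text.splitlines():
        if line.lstrip().startswith("```"):
            in_fence = not in_fence  # entering or leaving a code block
            body.append(line)
            continue
        if not in_fence and line.startswith("#"):
            level = len(line) - len(line.lstrip("#"))
            if 1 <= level <= 6 and line[level:level + 1] == " ":
                flush()
                del path[level - 1:]  # drop headings at this level or deeper
                path.append(line[level:].strip())
                continue
        body.append(line)
    flush()
    return chunks
```

A line that starts with `#` inside a fence (a shell comment, for example) stays in the chunk's content instead of being mistaken for a heading.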

For API developers, this means you can index the ModelsLab documentation locally and search it without the full docs ever hitting context. fetch_and_index extends this to URLs — it fetches a page, converts HTML to markdown, chunks it, and indexes it. The raw page never enters the conversation.

Porter stemming means "generates", "generating", and "generated" all match the same stem. You get exact code blocks with heading hierarchy — not summaries, the actual indexed content.
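The same combination of SQLite FTS5, Porter stemming, and BM25 ranking can be tried from Python's built-in `sqlite3` module. This is a sketch under the assumption that your Python's SQLite build includes FTS5 (most do); the table name, column names, and sample rows are made up for illustration and do not reflect Context Mode's actual schema.

```python
import sqlite3


def build_index(chunks: list[tuple[str, str]]) -> sqlite3.Connection:
    """Create an in-memory FTS5 table with Porter stemming and load chunks."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE VIRTUAL TABLE docs USING fts5(heading, content, tokenize='porter')"
    )
    conn.executemany("INSERT INTO docs (heading, content) VALUES (?, ?)", chunks)
    return conn


def search(conn: sqlite3.Connection, query: str, limit: int = 3) -> list[tuple]:
    """Return the best-matching chunks; bm25() is lower for better matches."""
    rows = conn.execute(
        "SELECT heading, content FROM docs WHERE docs MATCH ? "
        "ORDER BY bm25(docs) LIMIT ?",
        (query, limit),
    )
    return rows.fetchall()


# Hypothetical sample chunks, not real documentation text.
index = build_index([
    ("Images", "The endpoint generates an image from a text prompt."),
    ("Billing", "Invoices are issued at the start of each month."),
])
print(search(index, "generating"))
```

The query word "generating" finds the chunk containing "generates" because both reduce to the same stem, and the stored content comes back verbatim.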

Installation (2 Minutes)

Plugin Marketplace install (recommended — includes auto-routing hooks and slash commands):

/plugin marketplace add mksglu/claude-context-mode

/plugin install context-mode@claude-context-mode

MCP-only install (just the tools, no hooks):

claude mcp add context-mode -- npx -y context-mode

Restart Claude Code. Done. The PreToolUse hook activates automatically — no changes to your workflow required.

Benchmarks Across Real Developer Scenarios

The author validated across 11 real-world scenarios, including test triage, TypeScript error diagnosis, git diff review, dependency audit, API response processing, and CSV analytics. All scenarios produced under 1 KB of output.

The compound effect is significant: session time before slowdown goes from ~30 minutes to ~3 hours with the same 200K token window. That is roughly 6x longer productive coding sessions without upgrading your model or paying for more tokens.

Open Source, MIT License

The project is open source under the MIT license: github.com/mksglu/claude-context-mode. It was built by Mert Köseoğlu, who runs the MCP Directory & Hub (100K+ daily requests) and saw the same pattern across every MCP server that shipped: everyone built tools that dump raw data into context, and nobody was solving the output side.

Cloudflare solved the input side (tool definitions, 99.9% compression). Context Mode solves the output side. Together, they're the foundation for production-grade AI agent development.

Using ModelsLab APIs in Longer AI Agent Sessions

If you're building AI agents that call ModelsLab's image, video, or audio generation APIs, you're doing exactly the kind of work Context Mode was built for. Long-running agent sessions with multiple API calls, log inspection, and iterative debugging are where context burn hurts most.

ModelsLab's REST APIs return structured JSON — exactly the kind of output that benefits from sandbox extraction. Instead of the full response entering context, Context Mode extracts the fields your agent actually needs (image URL, job ID, status) and drops the metadata noise.
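A field-extraction step of that kind might look like the sketch below. The response shape and the key names (`status`, `id`, `output`) are hypothetical placeholders, not ModelsLab's documented schema; check the API reference for the real field names before relying on them.

```python
import json


def extract_fields(raw_json: str, wanted=("status", "id", "output")) -> dict:
    """Keep only the keys an agent needs from a JSON response body."""
    response = json.loads(raw_json)
    return {key: response[key] for key in wanted if key in response}


# Hypothetical response body, for illustration only.
raw = json.dumps({
    "status": "success",
    "id": 12345,
    "output": ["https://example.com/image.png"],
    "meta": {"prompt": "a lighthouse at dusk", "steps": 30, "seed": 42},
})
print(extract_fields(raw))
```

The `meta` block is dropped, so only the three requested fields would be passed on to the conversation.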

Try the APIs at modelslab.com — text-to-image, text-to-video, audio generation, and 100+ model endpoints available via a single API key.