What Is Karpathy's Autoresearch?

On the night of March 7, 2026, Andrej Karpathy pushed a 630-line Python script to GitHub and went to sleep. By morning, his AI agent had run 50 experiments, discovered a better learning rate, committed the proof to git, and moved on to the next hypothesis — all without a single human instruction in between.

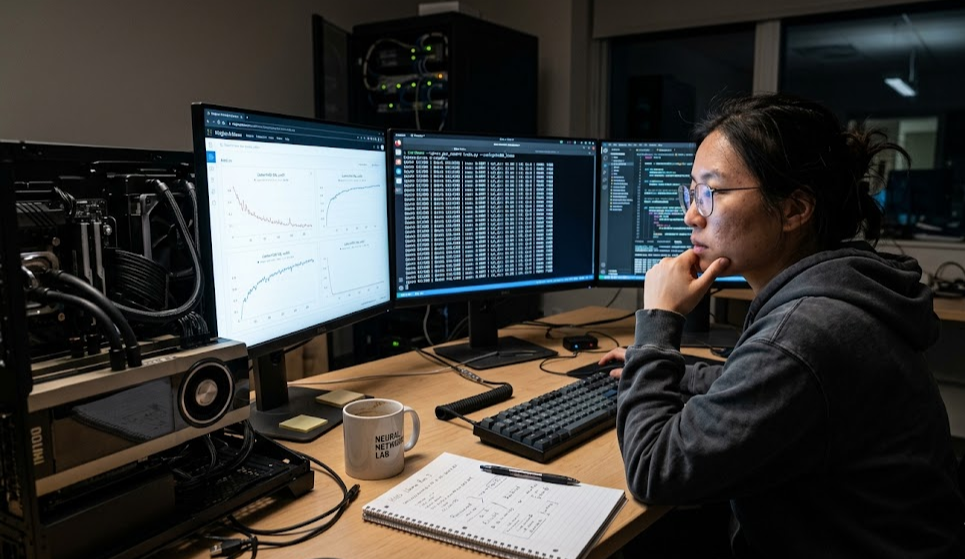

The project is called autoresearch, and it reframes how ML research gets done. Instead of a human sitting at a terminal cycling through hyperparameter changes one at a time, an AI agent takes over the execution loop: it modifies code, trains for exactly 5 minutes, checks if validation loss improved, keeps or discards the change, and repeats. You set the research direction before bed. You read the results over coffee.

Karpathy described the dynamic with a line that stuck with the community: "One day, frontier AI research used to be done by meat computers in between eating, sleeping, having other fun." Autoresearch takes that observation literally — replacing the tedious execution cycle while keeping humans in the strategic seat.

Within a week of release, the repo had crossed 33,000 GitHub stars. By early April 2026, it reached over 66,000 stars with 9,600 forks, making it one of the fastest-growing ML research tools in recent memory.

How the Agent Loop Works: A Nine-Step Ratchet

The autoresearch workflow operates as a ratchet — it only moves forward. Each iteration follows nine steps:

| Step | Action | Details |
|---|---|---|
| 1 | Read research priorities | Agent reads program.md for direction |
| 2 | Examine current state | Reviews train.py and recent results.tsv |
| 3 | Propose hypothesis | Suggests an architecture or optimizer change |
| 4 | Modify code | Edits train.py and commits to a git branch |
| 5 | Train | Runs training for exactly 5 minutes (wall-clock) |
| 6 | Handle failures | Logs crashes automatically and reverts |
| 7 | Evaluate | Measures val_bpb, records in results.tsv |
| 8 | Keep or discard | Keeps commit if val_bpb improved; otherwise git reset HEAD~1 |
| 9 | Repeat | Returns to step 1 |

This produces roughly 12 experiments per hour. An overnight run on a single GPU covers 80 to 100 experiments — a volume that would take a human researcher several days of manual cycling.

The system maintains a single lineage rather than a population, using git history as its research memory. The agent reads its own commit log and results.tsv to calibrate future proposals, building on validated directions and avoiding paths that already failed.
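The keep-or-discard rule in steps 6 through 8 can be sketched as follows. This is a minimal illustration, not the project's code: the `Ratchet` class, its method names, and the sample outcomes are all hypothetical, and the real loop works on git commits rather than an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Ratchet:
    """Keep-or-discard bookkeeping for a single-lineage search (illustrative only)."""

    best_val_bpb: float
    history: list = field(default_factory=list)

    def consider(self, description: str, val_bpb: Optional[float]) -> bool:
        """Record one experiment and return True if the change is kept.

        val_bpb is None when the training run crashed.
        """
        if val_bpb is None:
            status = "crashed"        # step 6: log the failure, revert
        elif val_bpb < self.best_val_bpb:
            status = "kept"           # step 8: lower is better, keep the commit
            self.best_val_bpb = val_bpb
        else:
            status = "discarded"      # step 8: no improvement, revert
        self.history.append((description, val_bpb, status))
        return status == "kept"


ratchet = Ratchet(best_val_bpb=1.000)

# Hypothetical experiment outcomes, not real results.
outcomes = [
    ("lower warmup fraction", 0.992),
    ("wider MLP", 1.004),
    ("bad reshape", None),
    ("higher peak lr", 0.985),
]
for description, val_bpb in outcomes:
    ratchet.consider(description, val_bpb)

kept = [d for d, _, status in ratchet.history if status == "kept"]
print(kept, ratchet.best_val_bpb)
```

Because the best score only ever decreases, the history doubles as the "research memory" described above: every entry records what was tried and whether it survived.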

The Three Files That Matter

Autoresearch is deliberately minimal. The entire system revolves around three files:

prepare.py — The Immutable Foundation

This file handles one-time data preparation: downloading the FineWeb-Edu training dataset, training a BPE tokenizer (8,192 tokens), and defining the evaluation harness. The agent never modifies prepare.py. It provides the fixed ground truth against which all experiments are measured.

train.py — The Agent's Sandbox

At 630 lines, train.py contains the full GPT model definition, a Muon + AdamW optimizer configuration, and the complete training loop. Everything is fair game for the agent to modify: architecture depth and width, attention mechanisms, activation functions, learning rate schedules, batch sizes, weight initialization, and optimizer hyperparameters.

The single-file constraint is intentional. As Karpathy noted, keeping everything in one file keeps the scope manageable and the diffs reviewable. When you wake up to 80 experiments, you want readable commit messages, not sprawling multi-file changes.

program.md — Where Humans Do Research

This is the most underappreciated component. program.md is a Markdown file that encodes your research strategy: what to optimize, what to explore, what constraints to respect, and what to avoid. Karpathy describes authoring this file as "programming the program.md" — you write your research direction in natural language, not Python.

A well-written program.md specifies baselines, experimental priorities, VRAM constraints, and failure-handling rules. It even hardcodes the directive: "NEVER STOP. Once the experiment loop has begun, do NOT pause."

The framing is that you are the chief scientist setting the research agenda. The AI agents are junior engineers running the lab overnight in tmux sessions. Your job is strategic; theirs is execution.

The val_bpb Metric: Why It Enables Everything

The evaluation metric is val_bpb — validation bits per byte. This is not an arbitrary choice. Bits per byte is vocabulary-size-independent, which means the agent can change tokenization strategy or model architecture between runs and still get a fair comparison.

This is what makes the ratchet work. If the metric were vocabulary-dependent (like raw cross-entropy loss), changing the tokenizer between experiments would make comparisons meaningless. With val_bpb, every experiment is evaluated on equal footing regardless of what the agent changed.

Lower val_bpb is better. The agent keeps changes that reduce it and reverts changes that increase it. Simple, unambiguous, and resistant to gaming — critical properties when an autonomous agent is making hundreds of decisions without human oversight.
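To see why normalizing by bytes removes the dependence on vocabulary, here is a small sketch using the standard definition of bits per byte (total cross-entropy in nats, converted to bits, divided by the UTF-8 byte count). The function name and the two tokenizers are hypothetical, and the actual evaluation harness in prepare.py may compute the metric differently.

```python
import math


def bits_per_byte(total_nats: float, total_bytes: int) -> float:
    """Convert a summed cross-entropy (in nats) over a text into bits per byte."""
    return total_nats / (math.log(2) * total_bytes)


text = "the quick brown fox jumps over the lazy dog"
n_bytes = len(text.encode("utf-8"))

# Hypothetical tokenizer A: 10 tokens at an average loss of 3.0 nats per token.
# Hypothetical tokenizer B: 20 tokens at an average loss of 1.5 nats per token.
per_token_a, tokens_a = 3.0, 10
per_token_b, tokens_b = 1.5, 20

bpb_a = bits_per_byte(per_token_a * tokens_a, n_bytes)
bpb_b = bits_per_byte(per_token_b * tokens_b, n_bytes)

# The per-token losses differ by 2x, but the per-byte cost of the text is the same.
print(round(bpb_a, 4), round(bpb_b, 4))
```

A per-token loss would rank tokenizer B as twice as good, even though both models spend the same number of bits encoding the same text.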

Why H100 GPUs: The Math Behind Research Velocity

The fixed 5-minute training budget per experiment is what makes the overnight workflow practical. But the GPU determines how much useful training happens in those 5 minutes — and the differences are substantial:

| GPU | Cycle Time | Experiments Overnight (12h) | Training Steps per Cycle |
|---|---|---|---|
| V100 32GB | 15-25 min | 20-30 | ~150 steps |
| A100 80GB | 8-10 min | 40-45 | ~350 steps |
| H100 SXM5 80GB | 5-7 min | 70-100 | ~500 steps |
| H200 141GB | 4-6 min | 80-110 | ~550 steps |

On an H100, each 5-minute cycle completes roughly 500 training steps — enough for the model to show meaningful signal about whether a change helped or hurt. On a V100, the same wall-clock budget gets you only ~150 steps, where signal-to-noise is much worse and the agent makes less-informed keep/discard decisions.

The 80GB VRAM on H100s also matters. Autoresearch's default configuration peaks at ~45GB memory usage, leaving comfortable headroom for the agent to experiment with larger batch sizes or wider architectures without hitting out-of-memory failures that waste experiment slots.

Getting roughly 3x more experiments than a V100 from the same overnight window is not just a speed improvement. It is a different research velocity, one that gives the ratchet enough attempts to converge on real improvements.

While Karpathy runs custom models on H100 clusters, developers who want to experiment with state-of-the-art AI models without managing GPU infrastructure can access 100,000+ models through ModelsLab's API — covering text generation, image synthesis, video, and audio with simple API calls.

Real-World Results: What the Numbers Show

Autoresearch has produced concrete, measurable improvements across multiple deployments:

Karpathy's Initial Overnight Run

In the first session, the agent ran 83 experiments and kept 15 improvements, driving val_bpb from 1.000 down to 0.975. Over a two-day extended run of ~700 experiments, roughly 20 changes were kept. These stacking improvements cut the time-to-GPT-2-quality by 11% on the nanochat leaderboard — from 2.02 hours to 1.80 hours.

Notable agent discoveries included:

- A missing scalar multiplier in QKnorm

- Value Embedding regularization that reduced overfitting

- Banded attention tuning for improved efficiency

- AdamW beta adjustments (beta2 from 0.95 to 0.98)

- Weight decay scheduling modifications

These are not trivial hyperparameter tweaks. Several are architectural insights that human researchers had overlooked.
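The headline figures from Karpathy's runs can be checked with a one-line relative-improvement calculation. The helper name below is an illustrative choice; the inputs are the numbers quoted above.

```python
def relative_improvement(before: float, after: float) -> float:
    """Fractional reduction from `before` to `after` (lower is better)."""
    return (before - after) / before


val_bpb_gain = relative_improvement(1.000, 0.975)   # first overnight session
time_gain = relative_improvement(2.02, 1.80)        # hours to GPT-2 quality

print(f"val_bpb: {val_bpb_gain:.1%}, time to GPT-2 quality: {time_gain:.1%}")
```

The second figure comes out at about 10.9%, which matches the 11% quoted for the nanochat leaderboard after rounding.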

Shopify's Production Deployment

Shopify CEO Tobi Lutke adapted autoresearch for an internal query-expansion model. After 37 experiments in 8 hours, the system achieved a 19% improvement in validation score — and a 0.8B parameter model that outperformed the previous 1.6B model it was replacing. That is a 2x parameter reduction with better performance, driven entirely by autonomous experimentation.

SkyPilot's Multi-GPU Scaling

The SkyPilot team gave Claude Code access to a Kubernetes cluster with 16 GPUs (13 H100s and 3 H200s). Over 8 hours, the agent submitted approximately 910 experiments — a 9x throughput increase over single-GPU runs. It ran factorial grids of 10-13 experiments per wave, catching interaction effects between parameters that sequential search would miss.

The agent even developed its own hardware strategy: it learned to use H200s for validation runs while screening initial ideas on H100s. Final result: val_bpb dropped from 1.003 to 0.974 — a 2.87% improvement over baseline.
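A factorial wave of the kind described above can be sketched with `itertools.product`. The factor names and levels here are hypothetical, since the source does not list the grids the agent actually ran; they are chosen so that one wave contains 12 configurations.

```python
from itertools import product

# Hypothetical factors and levels, for illustration only.
grid = {
    "peak_lr": [3e-4, 4e-4, 6e-4],
    "warmup_fraction": [0.05, 0.10],
    "batch_size": [128, 256],
}

# Every combination of levels becomes one experiment in the wave.
wave = [dict(zip(grid, values)) for values in product(*grid.values())]

print(len(wave))
for config in wave[:3]:
    print(config)
```

Running all combinations in parallel is what exposes interaction effects: a sequential search that changes one parameter at a time never observes, say, a higher learning rate paired with a shorter warmup unless it happens to propose both together.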

Red Hat OpenShift Deployment

Red Hat ran 198 experiments on OpenShift AI with H100 GPUs, resulting in 29 kept improvements and a 2.3% improvement in validation loss with zero human intervention.

Setting Up Autoresearch: A Practical Walkthrough

Prerequisites

- Single NVIDIA GPU (H100 recommended, A100 minimum for practical results)

- Python 3.10+

- The uv package manager

- An LLM API key (Claude or GPT-4; the agent uses an LLM to decide what changes to propose)

Installation

    git clone https://github.com/karpathy/autoresearch
    cd autoresearch

    python -c "import torch; print(torch.cuda.get_device_name(0))"

Configure the Agent

    # Set your LLM API key for the agent's decision-making
    export ANTHROPIC_API_KEY="sk-ant-..."
    # or
    export OPENAI_API_KEY="sk-..."

Write Your program.md

The default program.md is intentionally minimal. A strong research specification includes baselines, priorities, constraints, and evaluation criteria:

    # Research Goal
    Reduce val_bpb below 0.980 within 80 experiments.

    - Revert any change increasing val_bpb by more than 0.05 from current best

Launch the Overnight Run

    # Create a tmux session
    tmux new-session -d -s research

    tmux attach -t research

With --max-experiments 80 and 7-minute average cycles, the run completes in roughly 9 hours.
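The duration estimate is simple arithmetic, shown here as a small helper. The function name is illustrative and not part of autoresearch.

```python
def run_hours(max_experiments: int, avg_cycle_minutes: float) -> float:
    """Estimated wall-clock hours for a run with a fixed experiment cap."""
    return max_experiments * avg_cycle_minutes / 60


# 80 experiments at 7 minutes each is 560 minutes.
print(f"{run_hours(80, 7):.1f} hours")
```

Plugging in your own average cycle time gives a quick check on whether a given experiment cap fits the window you have.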

Reading Your Morning Results

The agent logs every experiment to results.tsv. A typical morning review looks like this:

    Experiment 047 | 03:14 AM
    Change: Reduced warmup_fraction from 0.10 to 0.05, increased peak_lr to 4e-4
    val_bpb_before: 0.9921 val_bpb_after: 0.9808
    Delta: -0.0113 ✓ KEPT

    Experiment 049 | 03:30 AM
    Change: Increased batch_size from 128 to 256
    val_bpb_before: 0.9808 val_bpb_after: 0.9800
    Delta: -0.0008 ✓ KEPT

Expect most experiments to revert. That is normal: in Karpathy's first overnight run, 15 of 83 experiments (about 18%) were kept, and the two-day run kept roughly 20 of about 700. The value is in the cumulative effect of stacking those improvements over 80+ iterations.
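A morning review can be reduced to a few summary numbers with Python's standard `csv` module. The sketch below assumes a tab-separated file with hypothetical column names (`experiment`, `change`, `val_bpb_before`, `val_bpb_after`, `status`); the real results.tsv layout may differ, and the discarded row is invented for illustration.

```python
import csv
import io

# Hypothetical results.tsv contents; the real file's columns may differ.
SAMPLE_TSV = (
    "experiment\tchange\tval_bpb_before\tval_bpb_after\tstatus\n"
    "047\tlower warmup_fraction, higher peak_lr\t0.9921\t0.9808\tkept\n"
    "048\tdeeper model\t0.9808\t0.9850\tdiscarded\n"
    "049\tbatch_size 128 -> 256\t0.9808\t0.9800\tkept\n"
)


def summarize(tsv_file):
    """Count experiments, kept changes, and the best val_bpb among kept rows."""
    rows = list(csv.DictReader(tsv_file, delimiter="\t"))
    kept = [r for r in rows if r["status"] == "kept"]
    best = min(float(r["val_bpb_after"]) for r in kept) if kept else None
    return {
        "total": len(rows),
        "kept": len(kept),
        "keep_rate": len(kept) / len(rows) if rows else 0.0,
        "best_val_bpb": best,
    }


summary = summarize(io.StringIO(SAMPLE_TSV))
print(summary)
```

For a file on disk, the same function can be called as `summarize(open("results.tsv", newline=""))`, provided the column names are adjusted to match.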

Limitations and Trade-offs

Autoresearch is powerful but not magic. Understanding its constraints helps you use it effectively:

The ratchet cannot go backward. The system only accepts improvements. This means it cannot make a short-term sacrifice (increasing loss temporarily) to enable a larger future gain, so it can get stuck in local optima.

Five minutes masks slow-converging changes. Some architectural modifications need longer training to show their true value. Changes that converge slowly but ultimately outperform will be discarded because they look worse at the 5-minute checkpoint.

Agents are conservative. Current LLMs, shaped by RLHF training, tend to propose safe, incremental changes rather than bold architectural experiments. The "creativity ceiling" means the agent is better at optimization than innovation.

Single-lineage search. Unlike population-based training, autoresearch maintains one lineage. It cannot explore multiple promising directions simultaneously (unless you scale to multiple GPUs with something like SkyPilot).
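The second limitation above, that a 5-minute budget can mask slow-converging changes, is easy to illustrate with toy loss curves. Everything in this sketch is hypothetical: the exponential curve shape, the floors, and the time constants are chosen only to show the effect, not fitted to any real run.

```python
import math


def loss_at(minutes: float, floor: float, gap: float, time_constant: float) -> float:
    """Toy loss curve: exponential decay from floor + gap toward floor."""
    return floor + gap * math.exp(-minutes / time_constant)


# Hypothetical curves: the "slow" change has a lower floor but converges more slowly.
fast = dict(floor=0.980, gap=0.5, time_constant=1.0)
slow = dict(floor=0.960, gap=0.5, time_constant=3.0)

for minutes in (5, 30):
    f = loss_at(minutes, **fast)
    s = loss_at(minutes, **slow)
    winner = "fast" if f < s else "slow"
    print(f"{minutes:>2} min: fast={f:.4f} slow={s:.4f} -> {winner} looks better")
```

At the 5-minute checkpoint the ratchet would discard the slow change, even though it ends up ahead with a longer budget.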

The Bigger Picture: What Autoresearch Means for ML Research

Autoresearch represents a shift in the researcher's role from experimenter to experimental designer. You no longer spend time babysitting training loops and manually logging hyperparameter changes. Instead, you invest your time in writing better program.md files — defining what questions to ask, not running the experiments yourself.

This is not just a productivity hack. It changes what kinds of research are economically viable. An individual researcher with a single H100 can now explore a configuration space overnight that previously required a team running experiments during business hours for a week.

The community response confirms the demand. Derivatives have appeared for Apple Silicon (MLX port), agent optimization (LangChain's autoresearch-agents), and domain-specific applications beyond LLM training.

For teams that want to integrate AI model capabilities into their products without building custom training infrastructure, platforms like ModelsLab provide API access to over 100,000 AI models — from text-to-image and video generation to LLM chat — letting developers focus on building applications while the infrastructure complexity is handled at the platform level.

Frequently Asked Questions

What hardware do I need to run autoresearch?

You need a single NVIDIA GPU with at least 20GB VRAM. An H100 SXM5 (80GB) is recommended for the best results — it completes training cycles in 5-7 minutes, enabling 70-100 experiments overnight. An A100 works but runs slower (~8-10 minutes per cycle, ~45 experiments overnight). Community forks exist for Apple Silicon (MLX) and AMD GPUs, but the original project targets NVIDIA CUDA.

Which LLM agent works best with autoresearch?

Autoresearch is compatible with Claude, GPT-4, and Codex-based agents. The agent needs to read code, propose modifications, and interpret numerical results. Claude Code and Cursor are commonly used in practice. The choice of agent affects the quality and creativity of proposed experiments — stronger reasoning models tend to produce more impactful changes.

How much does an overnight autoresearch run cost?

On cloud H100 instances, a 12-hour run typically costs $30-$50 depending on spot pricing. You also incur LLM API costs for the agent's decision-making — roughly 12 API calls per hour for 12 hours. Total cost including both GPU and API usage is usually under $80 for a full overnight session.
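A rough budget can be put together from the figures above. The hourly GPU rate and the per-call API cost in this sketch are assumptions picked for illustration (the GPU rate sits inside the range implied by $30-$50 over 12 hours); substitute your provider's actual prices.

```python
def overnight_cost(hours, gpu_rate_per_hour, api_calls_per_hour, cost_per_api_call):
    """Return (gpu_cost, api_cost, total_cost) in dollars."""
    gpu = hours * gpu_rate_per_hour
    api = hours * api_calls_per_hour * cost_per_api_call
    return gpu, api, gpu + api


# Assumed prices, not from the source: $3.00 per GPU-hour, $0.15 per agent call.
gpu, api, total = overnight_cost(
    hours=12,
    gpu_rate_per_hour=3.00,
    api_calls_per_hour=12,
    cost_per_api_call=0.15,
)
print(f"GPU ${gpu:.2f} + API ${api:.2f} = ${total:.2f}")
```

Under these assumptions the 144 agent calls account for a bit over a third of the bill, so the per-call cost of the chosen LLM matters nearly as much as the GPU price.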

Can I use autoresearch for tasks beyond LLM training?

Yes, but with modifications. The core pattern — modify code, run a fixed-duration experiment, evaluate a scalar metric, keep or discard — applies to any ML task with a clear evaluation metric. The community has adapted it for image classification (MobileNet V3), reinforcement learning reward tuning, and agent prompt optimization. The key requirement is a fast, reliable scalar metric that the agent can optimize against.

How does autoresearch compare to traditional hyperparameter search?

Traditional methods like grid search or Bayesian optimization explore a predefined parameter space. Autoresearch is fundamentally different: the agent can modify arbitrary code, not just tune numbers. It can change activation functions, add regularization layers, restructure attention mechanisms, or modify the optimizer itself. The trade-off is that it is less systematic — it relies on the LLM's judgment rather than exhaustive search — but it can discover structural improvements that parameter sweeps would never find.