LiteLLM lets you route requests across AI providers using one SDK. You configure a provider, set the API key, and your existing logging, retry, and fallback logic applies automatically. PR #21760 adds ModelsLab as a supported image generation provider — open and mergeable as of March 2026.

This guide shows you how to configure it, which models are available, and when ModelsLab is the right choice over DALL-E or other providers.

What you'll need

- Python 3.9+ with litellm installed

- A ModelsLab API key (get one here)

- 5 minutes

Install

pip install litellm

ModelsLab image generation support arrives through the open PR. Until that PR is merged and released, testing it means installing LiteLLM from the PR branch. After release, a regular upgrade picks it up:

pip install --upgrade litellm
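Because ModelsLab support depends on which LiteLLM build is installed, it can help to confirm the version before debugging anything else. The following is a minimal sketch using only the standard library. The helper name `installed_version` is hypothetical, and the source does not state which release first includes the provider, so the sketch only reports the version rather than checking it against a minimum.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Report what is installed; no minimum version is assumed here.
version = installed_version("litellm")
print(f"litellm version: {version or 'not installed'}")
```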

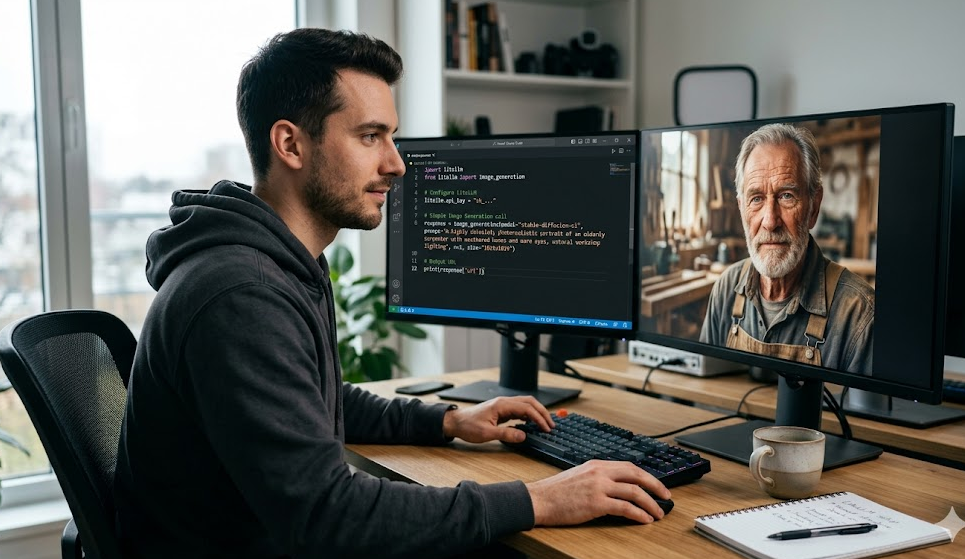

Basic image generation

Set your API key and call litellm.image_generation():

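import os

import litellm

os.environ["MODELSLAB_API_KEY"] = "your-api-key-here"

# Example prompt and size; adjust to your use case
response = litellm.image_generation(
    model="modelslab/flux-dev",
    prompt="A futuristic city skyline rendered in 4K",
    n=1,
    size="1024x1024",
)

image_url = response.data[0].url
print(image_url)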

The response follows the OpenAI image response format. If you're already handling DALL-E responses, this is a drop-in replacement — same object shape, same .data[0].url access pattern.
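Since the response shape is the same across providers, URL extraction can live in one small helper. This is a sketch under the assumption that each item in `response.data` exposes a `url` attribute, as described above. The helper name `extract_image_urls` is hypothetical, and the stand-in response exists only so the example runs without an API call.

```python
from types import SimpleNamespace
from typing import Any, List


def extract_image_urls(response: Any) -> List[str]:
    """Collect every non-empty URL from an OpenAI-style image response."""
    return [item.url for item in response.data if getattr(item, "url", None)]


# Stand-in for a real response, so the sketch runs without an API call.
fake_response = SimpleNamespace(
    data=[
        SimpleNamespace(url="https://example.com/a.png"),
        SimpleNamespace(url=None),  # items without a URL are skipped
    ]
)
print(extract_image_urls(fake_response))
```

Skipping items without a URL keeps the helper usable if a provider returns a different payload type for some entries.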

Available models

ModelsLab exposes several model families through the LiteLLM integration:

- modelslab/flux-dev: FLUX.1 Dev, highest quality output, 5–10s generation time

- modelslab/flux-schnell: FLUX.1 Schnell, 4 steps instead of 28, good for batch processing

- modelslab/sdxl: Stable Diffusion XL, well-documented and stable for production

- modelslab/stable-diffusion-3: SD3, stronger text rendering inside images

- modelslab/fluxgram-v1: Fluxgram V1.0, photorealistic character generation

The full model catalog is at modelslab.com/models, including video generation, voice cloning, and audio models available through separate LiteLLM endpoints.
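One way to keep model choice out of scattered string literals is a small lookup in your own code. The sketch below copies the model IDs and notes from the list above. The `pick_model` helper and its two flags are hypothetical and encode only the coarse guidance given in that list, not any official recommendation.

```python
# Model IDs and notes copied from the model list in this guide.
MODELS = {
    "modelslab/flux-dev": "highest quality output",
    "modelslab/flux-schnell": "4 steps, good for batch processing",
    "modelslab/sdxl": "well-documented and stable for production",
    "modelslab/stable-diffusion-3": "stronger text rendering inside images",
    "modelslab/fluxgram-v1": "photorealistic character generation",
}


def pick_model(batch: bool = False, text_in_image: bool = False) -> str:
    """Hypothetical helper: map two coarse needs onto a model ID."""
    if text_in_image:
        return "modelslab/stable-diffusion-3"
    if batch:
        return "modelslab/flux-schnell"
    return "modelslab/flux-dev"


print(pick_model(batch=True))
```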

LiteLLM proxy configuration

If you're running the LiteLLM proxy server for your team, add ModelsLab to your config.yaml:

model_list:
  - model_name: "image-gen"
    litellm_params:
      model: "modelslab/flux-dev"
      api_key: os.environ/MODELSLAB_API_KEY
  - model_name: "image-gen-fast"
    litellm_params:
      model: "modelslab/flux-schnell"
      api_key: os.environ/MODELSLAB_API_KEY

Applications call the proxy with model="image-gen" without knowing the underlying provider. You can swap ModelsLab for another provider by changing one line in the config, and application code stays unchanged.
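To make the application side concrete, here is a sketch of the HTTP request a client might send to the proxy. It assumes the proxy exposes the OpenAI-compatible `/v1/images/generations` route and listens on `http://localhost:4000`. The base URL, the `sk-placeholder` key, and the helper name `build_image_request` are all illustrative assumptions, so check them against your own deployment. The request is built but not sent, so the example runs without a proxy.

```python
import json
import urllib.request


def build_image_request(base_url: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style image request for the proxy."""
    body = {"model": "image-gen", "prompt": prompt, "n": 1, "size": "1024x1024"}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/images/generations",  # assumed route
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_image_request("http://localhost:4000", "sk-placeholder", "A red bicycle")
print(request.full_url)

# To send it against a running proxy:
# with urllib.request.urlopen(request) as resp:
#     print(json.load(resp)["data"][0]["url"])
```

Note that the body names only the alias `image-gen`; nothing in the client refers to ModelsLab.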

Fallback handling

LiteLLM handles provider fallbacks automatically. To fall back to DALL-E 3 if ModelsLab returns an error:

import litellm

response = litellm.image_generation(
    model="modelslab/flux-dev",
    prompt="A developer reviewing API logs on a dark terminal screen",
    n=1,
    size="1024x1024",
    fallbacks=["openai/dall-e-3"],
)

The retry logic, error handling, and provider selection are handled by LiteLLM. Your application code stays the same regardless of which provider ultimately serves the request.
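For intuition about what a fallback chain does, the idea can be sketched by hand: try each model in order and return the first success. This is not LiteLLM's implementation, only an illustration of the pattern. `generate_with_fallbacks` and `fake_generate` are hypothetical names, and the fake generator simulates a ModelsLab outage so the example runs offline.

```python
from typing import Any, Callable, Sequence


def generate_with_fallbacks(
    generate: Callable[..., Any],
    models: Sequence[str],
    **params: Any,
) -> Any:
    """Try each model in order and return the first successful response."""
    last_error = None
    for model in models:
        try:
            return generate(model=model, **params)
        except Exception as exc:  # broad on purpose: any error moves to the next model
            last_error = exc
    raise RuntimeError(f"all models failed: {list(models)}") from last_error


def fake_generate(model: str, **params: Any) -> str:
    """Stand-in for a real image call; fails for every modelslab/ model."""
    if model.startswith("modelslab/"):
        raise ConnectionError("simulated provider outage")
    return f"image from {model}"


result = generate_with_fallbacks(
    fake_generate,
    ["modelslab/flux-dev", "openai/dall-e-3"],
    prompt="A developer reviewing API logs on a dark terminal screen",
)
print(result)
```

In real use you would pass an actual image generation function in place of `fake_generate`, or rely on the library's own fallback handling as shown earlier.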

Observability

If you've set up LiteLLM logging already (Langfuse, Helicone, W&B, etc.), image generation requests from ModelsLab flow through the same pipeline automatically:

import litellm

# Logging callbacks (Langfuse, Helicone, W&B, etc.) are configured here, as in your existing setup

response = litellm.image_generation(
    model="modelslab/flux-dev",
    prompt="A futuristic city skyline rendered in 4K",
    n=1,
    size="1024x1024",
)

Cost tracking, latency metrics, and prompt logging work the same way as your LLM calls. No additional configuration needed for image generation specifically.
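If you want a latency metric without an external logging service, a custom callback function is one option. The sketch below assumes LiteLLM's custom callback convention of `(kwargs, completion_response, start_time, end_time)` with datetime timestamps, and assumes `kwargs` carries the model name. Verify both against the LiteLLM version you run. The registration lines are left as comments and the callback is invoked by hand so the example runs without litellm installed.

```python
from datetime import datetime, timedelta
from typing import Any, Dict, List

latency_log: List[Dict[str, Any]] = []


def track_latency(kwargs, completion_response, start_time, end_time) -> None:
    """Record the model name and wall-clock latency of one successful call."""
    latency_log.append(
        {
            "model": kwargs.get("model"),
            "seconds": (end_time - start_time).total_seconds(),
        }
    )


# Registration, assuming litellm is installed:
# import litellm
# litellm.success_callback = [track_latency]

# Simulated invocation so the sketch runs offline.
start = datetime(2026, 3, 1, 12, 0, 0)
track_latency({"model": "modelslab/flux-dev"}, None, start, start + timedelta(seconds=7))
print(latency_log)
```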

Pricing comparison

ModelsLab image generation API pricing:

- FLUX.1 Dev: $0.025–$0.05 per image (768px to 1024px)

- FLUX.1 Schnell: $0.009–$0.015 per image

- SDXL: $0.006–$0.012 per image

- Stable Diffusion 3: $0.020–$0.040 per image

For reference: DALL-E 3 standard costs $0.040 per 1024x1024 image. At the prices above, FLUX.1 Schnell via ModelsLab is roughly 2.7x to 4.4x cheaper, at comparable quality for most product image generation use cases. FLUX.1 Dev matches or exceeds DALL-E 3 quality on photorealistic subjects at a similar per-image cost.
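The comparison is simple arithmetic, and it is worth running against your own volume. The sketch below uses only the per-image prices quoted in this section. The helper names and the 10,000-image batch size are illustrative assumptions.

```python
from typing import Tuple

DALLE3_STANDARD = 0.040  # USD per 1024x1024 image, as quoted in this section

# (low, high) USD per image, as quoted in this section
PRICES = {
    "FLUX.1 Dev": (0.025, 0.05),
    "FLUX.1 Schnell": (0.009, 0.015),
    "SDXL": (0.006, 0.012),
    "Stable Diffusion 3": (0.020, 0.040),
}


def ratio_vs_dalle(model: str) -> Tuple[float, float]:
    """Return how many times cheaper than DALL-E 3 a model is: (at high price, at low price)."""
    low, high = PRICES[model]
    return (round(DALLE3_STANDARD / high, 2), round(DALLE3_STANDARD / low, 2))


def batch_cost(price_per_image: float, images: int) -> float:
    """Total USD cost for a batch, rounded to cents."""
    return round(price_per_image * images, 2)


print(ratio_vs_dalle("FLUX.1 Schnell"))   # (2.67, 4.44)
print(batch_cost(0.009, 10_000))          # 90.0
print(batch_cost(DALLE3_STANDARD, 10_000))  # 400.0
```

At 10,000 images, the low-end Schnell price comes to $90 against $400 for DALL-E 3 standard.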

When to use this setup

ModelsLab through LiteLLM works well when:

- You already use LiteLLM for LLM routing and want to consolidate image generation in the same SDK

- You're generating images at volume and need cost control (the savings from FLUX.1 Schnell at $0.009/image add up at scale)

- You need access to open models — FLUX, SDXL, SD3 — without managing GPU infrastructure

- You want provider flexibility without changing application code

Less ideal if you specifically need Azure OpenAI integrated billing or native DALL-E content policy filtering at the infrastructure level.

Get started

Create a free ModelsLab API key — trial credits included, no card required. The LiteLLM PR (#21760) is open and mergeable. Questions land fast in the ModelsLab Discord #developers channel.