Mx5 GGUF 7GB V1

by ModelsLab

This is a quantized version of my Flux model, made to run on lower-end graphics cards.

Thanks to chrisgoringe243 (https://civitai.com/user/chrisgoringe243) for quantizing this; the quality is really good for such a small model.

Larger GGUF versions for mid-range graphics cards are available here: https://huggingface.co/ChrisGoringe/MixedQuantFlux/tree/main
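To give a rough sense of how aggressive a 7GB quantization is, the arithmetic below estimates the average storage bits per weight. This is a back-of-the-envelope sketch under assumptions not stated on this page: "7GB" is read as 7 × 10^9 bytes, the file holds a Flux transformer of about 12 billion parameters (the size of FLUX.1), and file metadata overhead is ignored.

```python
# Back-of-the-envelope estimate of average bits per weight in a quantized file.
# Assumptions (not from this page): 7 GB = 7e9 bytes, ~12e9 parameters,
# and no allowance for metadata or non-weight tensors.

def bits_per_weight(file_size_bytes: float, num_params: float) -> float:
    """Average number of storage bits per parameter."""
    return file_size_bytes * 8 / num_params

def unquantized_size_gb(num_params: float, bits: int = 16) -> float:
    """Size in decimal GB if every weight used `bits` bits (e.g. bf16 = 16)."""
    return num_params * bits / 8 / 1e9

FILE_SIZE_BYTES = 7e9  # assumed reading of "7GB"
NUM_PARAMS = 12e9      # assumed ~12B-parameter Flux transformer

print(f"~{bits_per_weight(FILE_SIZE_BYTES, NUM_PARAMS):.2f} bits per weight")  # ~4.67
print(f"~{unquantized_size_gb(NUM_PARAMS):.0f} GB at 16 bits per weight")      # ~24
```

Under these assumptions the file averages under 5 bits per weight, roughly a third of the size of a 16-bit copy, which is why it fits on lower-end cards.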

Model ID: mx5gguf7gbv1

Each image generation costs $0.0047.

On the premium plan, image generation costs $0.00, i.e. it is free.
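The per-image pricing can be turned into a quick budget estimate. This minimal sketch assumes billing is a flat per-image rate with no other fees; the function name `generation_cost` is hypothetical. It uses `Decimal` so that currency amounts are not subject to binary floating-point rounding.

```python
from decimal import Decimal

# Prices taken from this page; flat per-image billing is an assumption.
PRICE_PER_IMAGE_USD = Decimal("0.0047")  # standard price per image
PREMIUM_PRICE_USD = Decimal("0.00")      # premium plan: free

def generation_cost(num_images: int, premium: bool = False) -> Decimal:
    """Hypothetical helper: total USD cost for num_images generations."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    price = PREMIUM_PRICE_USD if premium else PRICE_PER_IMAGE_USD
    return price * num_images

print(generation_cost(1000))                # 4.7000
print(generation_cost(1000, premium=True))  # 0.00
```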


Mx5 GGUF 7GB V1 Readme

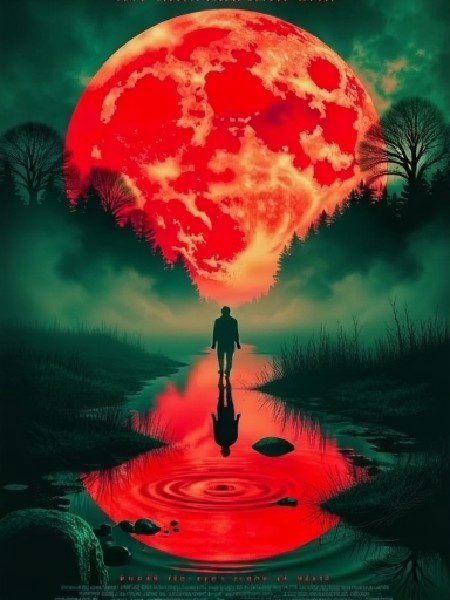

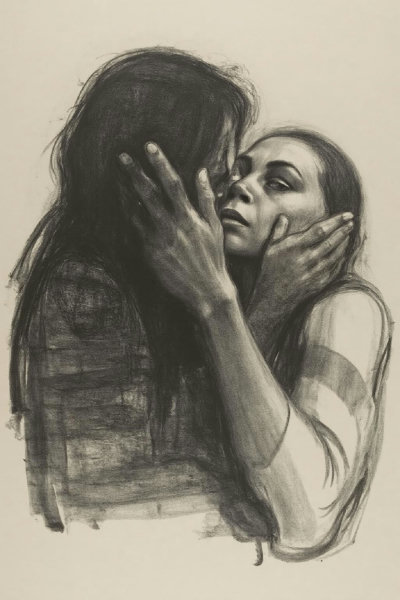
